Learning in extended reality

2.5 billion course registrations power limitless potential

125 million users chart their own path with always-on learning

Every 3 seconds someone takes a course in Cornerstone

Deliver the outcomes that matter most to your people and your organization

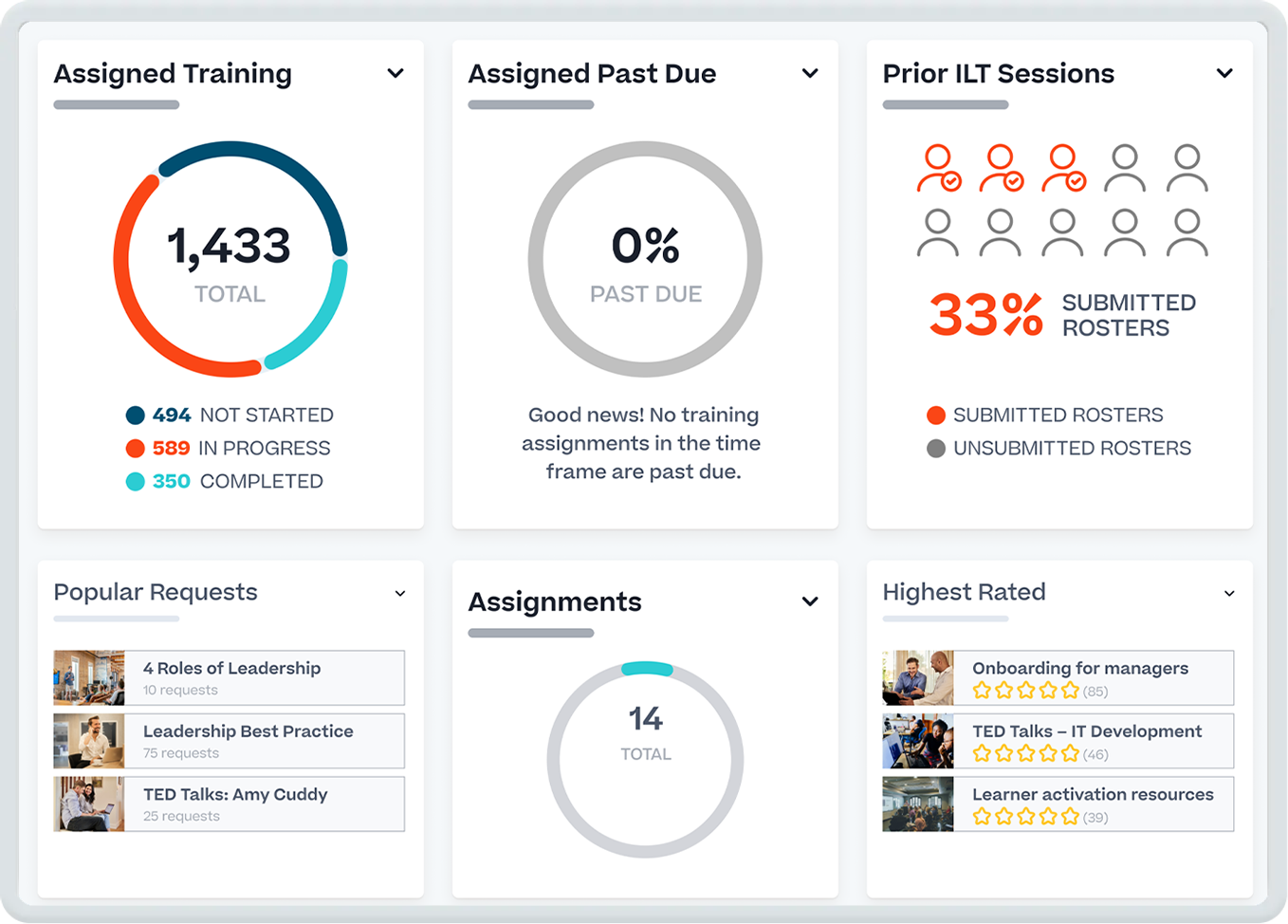

Empower your people to work more effectively

Deliver, manage, and track global training for your workforce, customers, and partners.

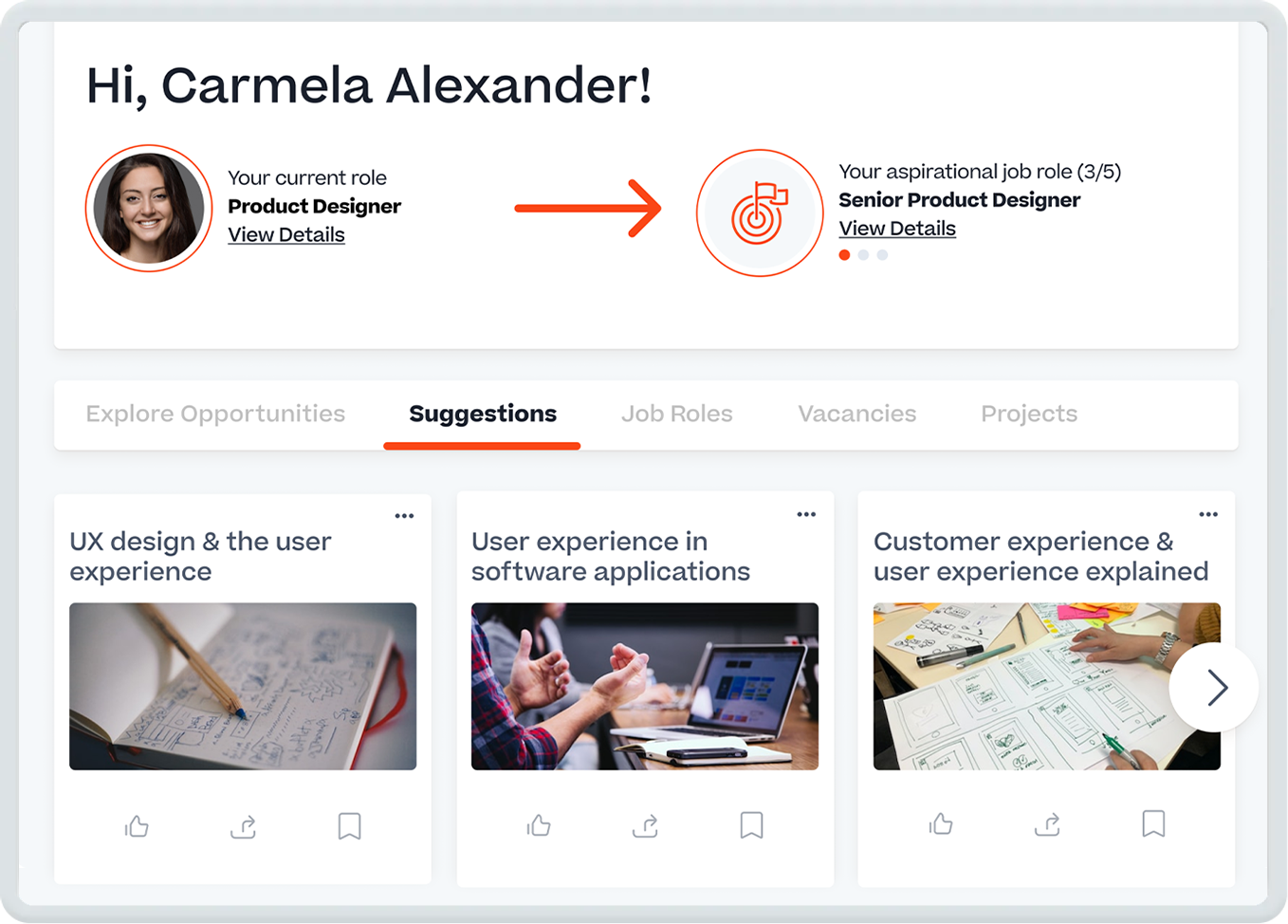

Grow your talent with personalized development

Provide your people with internal mobility opportunities and the skills they need to unlock their full potential.

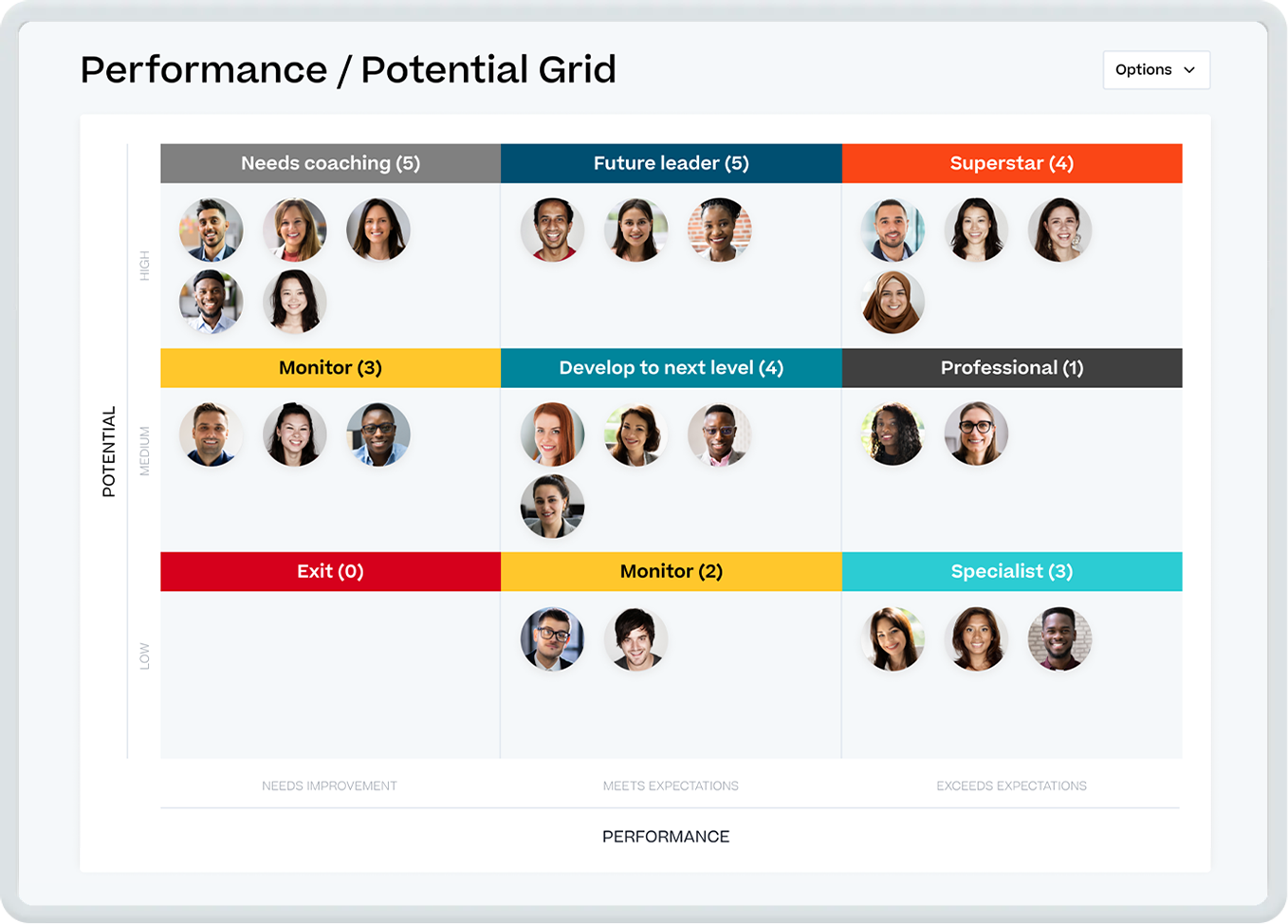

Optimize your organization with robust talent insights

Use comprehensive data about your organization to recognize high performers and develop your future leaders.

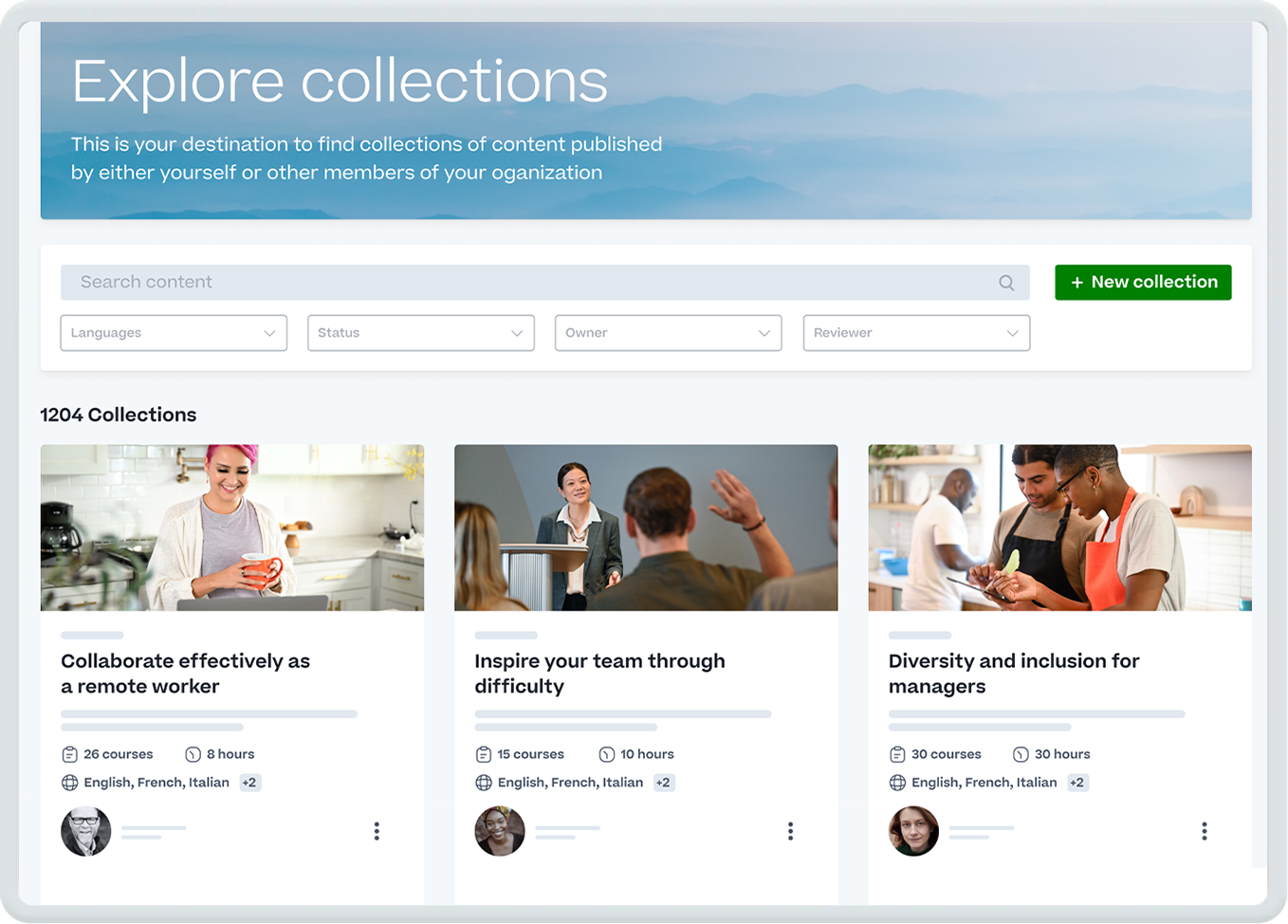

Engage your workforce with premium learning content

Provide your people with award-winning, curated learning content that engages them in their flow of work.

7,000+ customers in 186 countries choose Cornerstone

Named an undisputed leader 6x by top analyst firms in 2023

30+ awards for outstanding product, leadership, and innovation

The most iconic brands choose Cornerstone

“Having everything in one easy-to-use platform that is accessible anytime has been a lifesaver for us. After enabling mobile learning, we saw usage rise tenfold! Engagement is at an all-time high, and it’s still going up.”

“You can always make more money, but you can’t make more time. Cornerstone creates efficiencies that free our people to apply their learning to other productive activities.”

More from Cornerstone

Want to keep learning? Explore our products, customer stories, and the latest industry insights.

Schedule a personalized 1:1

Talk to a Cornerstone expert about your organization’s unique people management needs.